In recent years, as artificial intelligence systems have evolved beyond simple automation, a new paradigm has emerged: agentic reasoning. This approach lets intelligent systems move beyond reactive responses to form goals, reason about actions, adapt based on feedback, and operate with purpose. In other words, these systems act more like autonomous agents than passive tools.

Agentic reasoning represents a shift in how we design AI: rather than commanding systems with rigid scripts or workflows, we imbue them with the capacity to deliberate, prioritize, and adjust in dynamic environments. This shift is especially significant when tackling complex, ambiguous tasks that require contextual understanding, flexibility, and long-term planning.

In this article, we will explore:

- The foundations of agentic reasoning
- Its core components
- Implementation strategies
- Real-world applications
- Challenges and caveats
- Future trajectories and best practices

By the end, you should have a clear grasp of what agentic reasoning means, why it matters, and how to think about integrating it into real systems.

What Is Agentic Reasoning?

At its core, agentic reasoning is the ability of a system or model to formulate internal goals, plan actions, execute them, observe outcomes, and revise its strategy—all without requiring step-by-step instructions for every move.

That is, instead of being told exactly what to do next, an agentic system has:

- Initiative: It can recognize opportunities or problems and decide what matters next.
- Autonomy: It doesn’t wait on human prompts for every step; it acts proactively.
- Context awareness: It understands and reasons about the data, constraints, and environment around it.
- Adaptivity: It updates strategies based on feedback, errors, or shifting objectives.

In contrast, “non-agentic” or reactive systems tend to follow templates or fixed logic: they wait for user inputs, invoke predetermined routines, and lack flexibility in ambiguous or evolving contexts. Agentic reasoning aims to bridge the gap between human-level deliberation and machine execution.

As the complexity of real-world tasks grows—projects with shifting dependencies, cross-team coordination, fragmented data sources—the limitations of rigid logic become apparent. What’s needed is not only execution but reasoning: making judgment calls, reordering priorities, dynamically adjusting plans.

If you imagine a human project manager, part of their role is to observe, reprioritize, pivot strategy, and manage tradeoffs on the fly. Agentic reasoning aims to encapsulate that kind of meta-thinking within intelligent systems.

Core Components of Agentic Reasoning

To build systems capable of agentic behavior, several building blocks are essential. Working together, these components let an agent reason, act, and adapt.

1. Goal Formulation

The agent needs a starting point: an objective or mission. But unlike simple instructions (“do X, then Y”), agentic systems often generate or refine goals internally, based on context, emerging data, or higher-level directives.

Goals may be:

- Externally provided (e.g. “increase customer satisfaction by X%”)
- Discovered internally (e.g. noticing recurring support issues and deciding to reduce response time)
- Hierarchical (high-level mission broken into subgoals)

Because goals can evolve, the agent must continuously evaluate whether they remain relevant and switch or refine them when necessary.
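To make the hierarchical case concrete, here is a minimal sketch of a goal tree. A mission breaks into subgoals, goals carry a hypothetical `source` tag (given or discovered), and a `relevant` flag lets the agent drop a branch when the context changes. The class and field names are illustrative assumptions, not a standard API.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Goal:
    """One node in a goal hierarchy: a mission, a subgoal, or an actionable leaf."""
    description: str
    source: str = "external"   # "external" (given) or "discovered" (inferred from data)
    relevant: bool = True      # re-evaluated whenever the context changes
    subgoals: list[Goal] = field(default_factory=list)

    def add(self, sub: Goal) -> Goal:
        self.subgoals.append(sub)
        return sub


def active_leaves(goal: Goal) -> list[str]:
    """Collect the actionable leaf goals, skipping any branch marked irrelevant."""
    if not goal.relevant:
        return []
    if not goal.subgoals:
        return [goal.description]
    leaves: list[str] = []
    for sub in goal.subgoals:
        leaves.extend(active_leaves(sub))
    return leaves


mission = Goal("Increase customer satisfaction")
faster_support = mission.add(Goal("Reduce support response time", source="discovered"))
faster_support.add(Goal("Auto-triage incoming tickets"))
faster_support.add(Goal("Publish FAQ for recurring issues"))
mission.add(Goal("Run quarterly survey"))

print(active_leaves(mission))     # all three leaves
faster_support.relevant = False   # context shifted: this branch no longer matters
print(active_leaves(mission))     # ['Run quarterly survey']
```

In a real agent, the `relevant` flag would be set by a periodic re-evaluation step rather than by hand.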

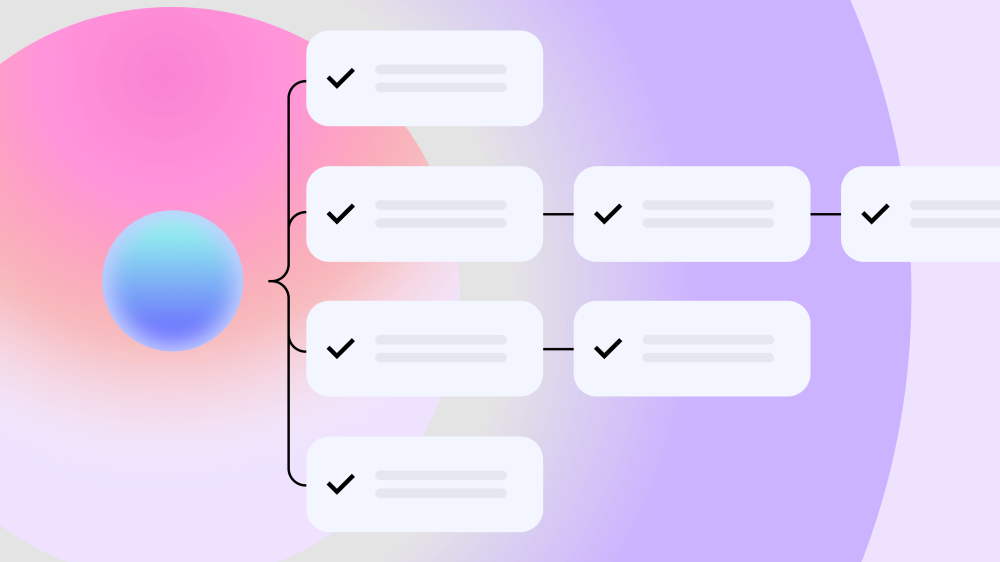

2. Planning & Decomposition

Once a goal exists, the system needs to break it into actionable units—tasks, subtasks, decision branches, dependencies. This involves:

- Understanding constraints (resources, time, dependencies)
- Sequencing steps optimally
- Considering parallelism vs. serial work
- Weighing tradeoffs (speed vs. precision, short-term vs. long-term)

A good planning engine allows the agent to reason about partial orderings and contingencies—rather than forcing a strict linear script.
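A partial ordering can be represented as a dependency graph instead of a fixed list. The sketch below uses Python's standard-library `graphlib.TopologicalSorter` (Python 3.9+) to group hypothetical subtasks into "waves". Tasks within a wave have no dependencies on each other and could run in parallel, while the waves themselves run in order. The task names are made up for illustration.

```python
from graphlib import TopologicalSorter

# Hypothetical subtasks, each mapped to the tasks it depends on.
dependencies = {
    "collect_tickets": set(),
    "clean_data": {"collect_tickets"},
    "cluster_issues": {"clean_data"},
    "draft_faq": {"cluster_issues"},
    "tune_routing": {"cluster_issues"},
    "report": {"draft_faq", "tune_routing"},
}


def plan_waves(deps: dict[str, set[str]]) -> list[list[str]]:
    """Group tasks into waves: tasks in one wave may run in parallel,
    waves run in sequence. Raises graphlib.CycleError on circular dependencies."""
    sorter = TopologicalSorter(deps)
    sorter.prepare()
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted only for stable output
        waves.append(ready)
        sorter.done(*ready)                 # mark the wave finished to unlock successors
    return waves


for i, wave in enumerate(plan_waves(dependencies), start=1):
    print(i, wave)
```

Because the plan is derived from the graph, adding or removing a dependency automatically reshapes the schedule, so no script has to be rewritten.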

3. Contextual Memory & Feedback

Memory is essential. An agent must remember what has been done, what outcomes resulted, what decisions succeeded or failed, and what external changes occurred. This memory layer enables:

- Tracking long-term progress
- Learning from past actions
- Avoiding repeating poor decisions
- Reusing useful strategies

Without memory, an agent becomes myopic—reacting freshly on every request, unable to accumulate wisdom.
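As a minimal sketch of the "avoid poor decisions, reuse useful ones" idea, the class below records outcomes per (context, strategy) pair. It rules out strategies with enough evidence of failure and otherwise prefers the best-known option. The thresholds, the optimistic `prior` for untried strategies, and all names are illustrative assumptions.

```python
from __future__ import annotations

from collections import defaultdict


class StrategyMemory:
    """Remembers outcomes of (context, strategy) pairs across episodes."""

    def __init__(self, min_trials: int = 3, min_success_rate: float = 0.5, prior: float = 0.5):
        self.min_trials = min_trials              # evidence needed before ruling a strategy out
        self.min_success_rate = min_success_rate
        self.prior = prior                        # assumed rate for strategies never tried here
        self._stats: dict[tuple[str, str], list[int]] = defaultdict(lambda: [0, 0])

    def record(self, context: str, strategy: str, succeeded: bool) -> None:
        stats = self._stats[(context, strategy)]  # [successes, attempts]
        stats[0] += int(succeeded)
        stats[1] += 1

    def _rate(self, context: str, strategy: str) -> tuple[float, int]:
        successes, attempts = self._stats.get((context, strategy), (0, 0))
        return (successes / attempts if attempts else self.prior), attempts

    def choose(self, context: str, candidates: list[str]) -> str | None:
        """Pick the best remaining strategy, skipping ones with a proven poor record.
        Returns None when every candidate is ruled out (a cue to escalate)."""
        viable = []
        for strategy in candidates:
            rate, attempts = self._rate(context, strategy)
            if attempts >= self.min_trials and rate < self.min_success_rate:
                continue  # avoid repeating a decision that keeps failing
            viable.append((rate, strategy))
        return max(viable)[1] if viable else None


memory = StrategyMemory()
for ok in (False, False, True):
    memory.record("billing_ticket", "auto_reply", ok)
for ok in (True, True, False):
    memory.record("billing_ticket", "route_to_finance", ok)

print(memory.choose("billing_ticket", ["auto_reply", "route_to_finance", "escalate"]))
# route_to_finance
```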

4. Adaptive Execution

Planning is not enough; execution must be dynamic. As tasks are executed, the agent should monitor results, detect deviations, and adjust subsequent actions accordingly. Execution and reasoning loops must run in tandem.

For example:

- If a subtask fails, the agent might re-plan the path
- If data quality is poor, it might prioritize cleaning or sourcing better data
- If constraints tighten, it might revise resource allocation

This ability to course-correct is what distinguishes agentic systems from one-shot scripts.
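The course-correction loop can be sketched in a few lines. Tasks run in order, and when one fails, a planner callback produces a new remaining plan. After a bounded number of re-plans, the loop escalates instead of retrying forever. The `run` and `replan` functions below are hypothetical stand-ins for a real executor and planner.

```python
from __future__ import annotations

from collections.abc import Callable


def execute_adaptively(plan: list[str],
                       run: Callable[[str], bool],
                       replan: Callable[[str, list[str]], list[str]],
                       max_replans: int = 2) -> list[str]:
    """Run tasks in order; on failure, ask the planner for a revised remaining plan.
    Escalates once the re-plan budget is exhausted."""
    log: list[str] = []
    queue = list(plan)
    replans = 0
    while queue:
        task = queue.pop(0)
        if run(task):
            log.append(f"done:{task}")
            continue
        log.append(f"failed:{task}")
        if replans == max_replans:
            log.append("escalate")   # stop and hand over to a human
            break
        replans += 1
        queue = replan(task, queue)
    return log


def run(task: str) -> bool:
    # Hypothetical executor: the primary data source is down.
    return task != "fetch_primary"


def replan(failed: str, remaining: list[str]) -> list[str]:
    # Hypothetical planner: substitute a fallback for the failed step, if one exists.
    fallback = {"fetch_primary": "fetch_backup"}
    return ([fallback[failed]] if failed in fallback else []) + remaining


print(execute_adaptively(["fetch_primary", "clean", "report"], run, replan))
# ['failed:fetch_primary', 'done:fetch_backup', 'done:clean', 'done:report']
```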

5. Control Loops & Oversight

Closely tied to execution is the need for supervisory loops: mechanisms to check alignment, enforce constraints, intervene when things go off track, and allow human override. A purely autonomous system is risky; one must balance autonomy with guardrails.

Agents need decision points where they ask: Am I still aligned with my goals? Are my next actions safe and permissible? If not, they either rethink the plan or escalate to a human.
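A decision point like this can be sketched as a small checkpoint function that runs before every action. Under the hypothetical action schema shown in the docstring, the function escalates anything not permitted or irreversible. It sends the agent back to replanning when the action serves none of the active goals, and otherwise lets it proceed. A real system would use richer policies; the structure of three possible verdicts is the point here.

```python
from enum import Enum


class Verdict(Enum):
    PROCEED = "proceed"
    RETHINK = "rethink"     # drifted from the goals: replan before acting
    ESCALATE = "escalate"   # risky or out of bounds: hand to a human


def checkpoint(action: dict, active_goals: set[str]) -> Verdict:
    """Decision point evaluated before each action.

    `action` uses a hypothetical schema:
      {"name": str, "serves": [goal ids], "irreversible": bool, "permitted": bool}
    """
    if not action.get("permitted", True) or action.get("irreversible", False):
        return Verdict.ESCALATE
    if not active_goals & set(action.get("serves", [])):
        return Verdict.RETHINK
    return Verdict.PROCEED


goals = {"reduce_response_time"}
print(checkpoint({"name": "auto_tag", "serves": ["reduce_response_time"]}, goals))
print(checkpoint({"name": "redesign_logo", "serves": ["brand_refresh"]}, goals))
print(checkpoint({"name": "delete_old_tickets", "serves": ["reduce_response_time"],
                  "irreversible": True}, goals))
```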

How to Implement Agentic Reasoning

Designing an agentic system is as much about architecture and environment as it is about modeling. Here’s a step-by-step perspective.

Step 1: Define Decision Boundaries, Not Scripts

Instead of prescribing exact steps, give the agent boundaries:

- What resources it can access
- What goals it should pursue
- What tradeoffs it should consider
- Safety or compliance constraints

These boundaries guide agent decisions without freezing them into inflexible rules.
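One way to express boundaries instead of scripts is as data. The agent is free to choose any action inside the envelope, and the envelope itself never dictates an order of steps. The sketch below assumes a hypothetical `Boundaries` record with an allowed-tool set, a cost budget, and a PII-handling rule, and uses it to filter candidate actions.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    tool: str
    cost: float               # estimated spend, in arbitrary units
    touches_pii: bool = False


@dataclass(frozen=True)
class Boundaries:
    """Hypothetical decision envelope: what the agent may do, not what it must do."""
    allowed_tools: frozenset[str]
    budget: float
    allow_pii: bool = False   # compliance constraint

    def permits(self, action: Action, spent: float = 0.0) -> bool:
        return (action.tool in self.allowed_tools
                and spent + action.cost <= self.budget
                and (self.allow_pii or not action.touches_pii))


bounds = Boundaries(allowed_tools=frozenset({"search_kb", "draft_reply", "tag_ticket"}), budget=5.0)
candidates = [
    Action("search_kb", 1.0),
    Action("draft_reply", 2.0),
    Action("export_customer_list", 0.5, touches_pii=True),
    Action("tag_ticket", 4.5),
]
options = [a.tool for a in candidates if bounds.permits(a, spent=1.0)]
print(options)  # ['search_kb', 'draft_reply']
```

The planner then chooses among `options` using its own reasoning. Tightening or loosening the envelope changes what is possible without rewriting any workflow.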

Step 2: Build a Reasoning Engine (Planner + Controller)

The heart of an agentic stack is the reasoning engine. Key modules include:

- Planner / decomposer: splits goals into tasks or subtasks
- Memory / knowledge store: records states, outcomes, context
- Control loop / monitor: evaluates progress, triggers rethinking
- Evaluator / scorer: ranks alternative paths or decisions

This reasoning engine becomes the “brain” that dynamically sequences tasks, monitors outcomes, and updates plans.
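Of these modules, the evaluator is the easiest to show in isolation. The sketch below assumes a simple weighted-sum scorer over three hypothetical attributes of a candidate path: estimated time, expected accuracy, and risk. Changing the weights shifts which path wins, which is how the speed-vs-precision tradeoff becomes explicit and tunable. Weighted sums are only one possible scoring scheme.

```python
from dataclasses import dataclass


@dataclass
class Path:
    name: str
    est_hours: float     # lower is better
    est_accuracy: float  # 0..1, higher is better
    risk: float          # 0..1, lower is better


def score(p: Path, w_speed=0.3, w_accuracy=0.5, w_risk=0.2, horizon_hours=10.0) -> float:
    """Weighted sum; speed is normalized to 0..1 against a planning horizon."""
    speed = max(0.0, 1 - p.est_hours / horizon_hours)
    return w_speed * speed + w_accuracy * p.est_accuracy - w_risk * p.risk


def rank(paths, **weights):
    return sorted(paths, key=lambda p: score(p, **weights), reverse=True)


paths = [
    Path("quick_patch", est_hours=1, est_accuracy=0.60, risk=0.4),
    Path("full_fix", est_hours=6, est_accuracy=0.95, risk=0.1),
    Path("rewrite", est_hours=12, est_accuracy=0.98, risk=0.5),
]
print([p.name for p in rank(paths)])  # ['full_fix', 'quick_patch', 'rewrite']
print(rank(paths, w_speed=0.7, w_accuracy=0.2, w_risk=0.1)[0].name)  # quick_patch
```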

Step 3: Use an Environment That Supports Adaptivity

Agents can’t thrive on rigid, linear systems. The surrounding infrastructure must allow:

- Dynamic task reordering
- On-the-fly prioritization changes
- Cross-functional triggers (i.e., tasks can cascade or shift)
- Data connectivity across tools

If you bolt an agentic module onto a monolithic, non-reactive stack, it hits a ceiling quickly.
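As a small illustration of infrastructure that tolerates on-the-fly reprioritization, here is a task queue built on Python's `heapq`. It uses the lazy-deletion pattern described in the `heapq` documentation: re-prioritizing pushes a new entry, and stale or cancelled entries are skipped when popped. The `TaskQueue` class itself is a hypothetical example, not a specific product's API.

```python
from __future__ import annotations

import heapq
import itertools


class TaskQueue:
    """Priority queue whose priorities can change at any time (lower = more urgent)."""

    def __init__(self) -> None:
        self._heap: list[tuple[int, int, str]] = []
        self._current: dict[str, int] = {}   # task -> latest priority
        self._counter = itertools.count()    # tie-breaker keeps insertion order

    def push(self, task: str, priority: int) -> None:
        self._current[task] = priority
        heapq.heappush(self._heap, (priority, next(self._counter), task))

    def reprioritize(self, task: str, priority: int) -> None:
        self.push(task, priority)            # the newer entry supersedes the old one

    def cancel(self, task: str) -> None:
        self._current.pop(task, None)

    def pop(self) -> str | None:
        while self._heap:
            priority, _, task = heapq.heappop(self._heap)
            if self._current.get(task) == priority:  # skip stale or cancelled entries
                del self._current[task]
                return task
        return None


q = TaskQueue()
q.push("write_docs", 3)
q.push("fix_flaky_test", 2)
q.push("upgrade_deps", 4)
q.reprioritize("upgrade_deps", 1)   # e.g. a security advisory arrives
q.cancel("write_docs")              # e.g. scope was cut
print([q.pop(), q.pop(), q.pop()])  # ['upgrade_deps', 'fix_flaky_test', None]
```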

Step 4: Integrate Feedback Loops

To get smarter over time, agentic systems require well-established feedback mechanisms:

- Log successes and failures, with metrics
- Capture qualitative commentary (why this path failed)
- Feed back into evaluators or learning modules
- Periodically review and refine logic based on results

These feedback loops are essential so that the agent can self-correct and evolve.
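A minimal version of such a loop pairs each outcome with a metric and a free-text reason. It stores the records as JSON Lines and runs a periodic review that surfaces the most common failure causes. The record fields and the "minutes to resolution" metric are illustrative assumptions.

```python
import json
from collections import Counter
from dataclasses import asdict, dataclass


@dataclass
class FeedbackRecord:
    task: str
    succeeded: bool
    metric: float        # hypothetical metric, e.g. minutes to resolution
    reason: str = ""     # qualitative note on why a path failed


def to_jsonl(records) -> str:
    """Serialize one JSON object per line."""
    return "\n".join(json.dumps(asdict(r)) for r in records)


def review(jsonl: str) -> dict:
    """Periodic review: success rate, mean metric, and most common failure reasons."""
    rows = [json.loads(line) for line in jsonl.splitlines() if line]
    failures = Counter(r["reason"] for r in rows if not r["succeeded"])
    return {
        "success_rate": sum(r["succeeded"] for r in rows) / len(rows),
        "mean_metric": sum(r["metric"] for r in rows) / len(rows),
        "top_failure_reasons": failures.most_common(2),
    }


log = to_jsonl([
    FeedbackRecord("triage", True, 12.0),
    FeedbackRecord("triage", False, 45.0, "missing account data"),
    FeedbackRecord("triage", False, 30.0, "missing account data"),
    FeedbackRecord("triage", True, 9.0),
    FeedbackRecord("triage", False, 60.0, "wrong team"),
])
print(review(log))
```

The review output is what a human, or an evaluator module, would act on. In this sample, fixing access to account data addresses the largest share of failures.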

Step 5: Start Small, Grow Safely

Full autonomy is dangerous early on. A safer rollout might:

- Begin with constrained subdomains or low-risk domains
- Keep human oversight, audit logs, and reviewable decisions
- Make agent decisions visible to humans, with explanations
- Gradually increase autonomy as trust, performance, and transparency improve

This incremental adoption helps teams adapt cultural and technical practices in tandem.
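Incremental autonomy can be encoded as explicit levels. The sketch below assumes three hypothetical levels (suggest, act with approval, autonomous) and a policy that caps high-risk actions at approval-gated mode. It also adds a promotion rule that raises the level only after a sustained approval rate. The thresholds are placeholders to tune, not recommendations.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    SUGGEST = 1             # agent proposes; a human acts
    ACT_WITH_APPROVAL = 2   # agent acts after a human approves
    AUTONOMOUS = 3          # agent acts; humans audit afterwards


def handle(action: str, risk: str, level: Autonomy, approve) -> str:
    """Route an action by autonomy level; high-risk actions never skip approval."""
    effective = min(level, Autonomy.ACT_WITH_APPROVAL) if risk == "high" else level
    if effective == Autonomy.SUGGEST:
        return f"suggested: {action}"
    if effective == Autonomy.ACT_WITH_APPROVAL:
        return f"executed: {action}" if approve(action) else f"rejected: {action}"
    return f"executed: {action} (logged for audit)"


def next_level(level: Autonomy, approvals: int, decisions: int,
               threshold: float = 0.95, min_decisions: int = 50) -> Autonomy:
    """Promote one level only after a sustained track record of approved decisions."""
    if (decisions >= min_decisions and approvals / decisions >= threshold
            and level < Autonomy.AUTONOMOUS):
        return Autonomy(level + 1)
    return level


print(handle("restart_worker", "low", Autonomy.SUGGEST, approve=lambda a: True))
print(handle("drop_table", "high", Autonomy.AUTONOMOUS, approve=lambda a: False))
print(next_level(Autonomy.SUGGEST, approvals=48, decisions=50))
```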

Real-World Applications & Use Cases

Agentic reasoning is more than a theoretical ideal. The use cases below show where it can reshape workflows, operations, and decision-making.

1. Product Delivery & Project Management Agents

In agile or cross-functional teams, agentic systems monitor product backlogs, sprint velocities, dependencies, and scope creep across tools (e.g. version control, issue trackers). They:

- Detect when stories are getting delayed
- Suggest trimming scope or redistributing tasks
- Flag risk to deadlines
- Rebalance workloads dynamically

Rather than waiting on a human manager to notice problems, the agent constantly reasons across task networks, timeframes, and dependencies.

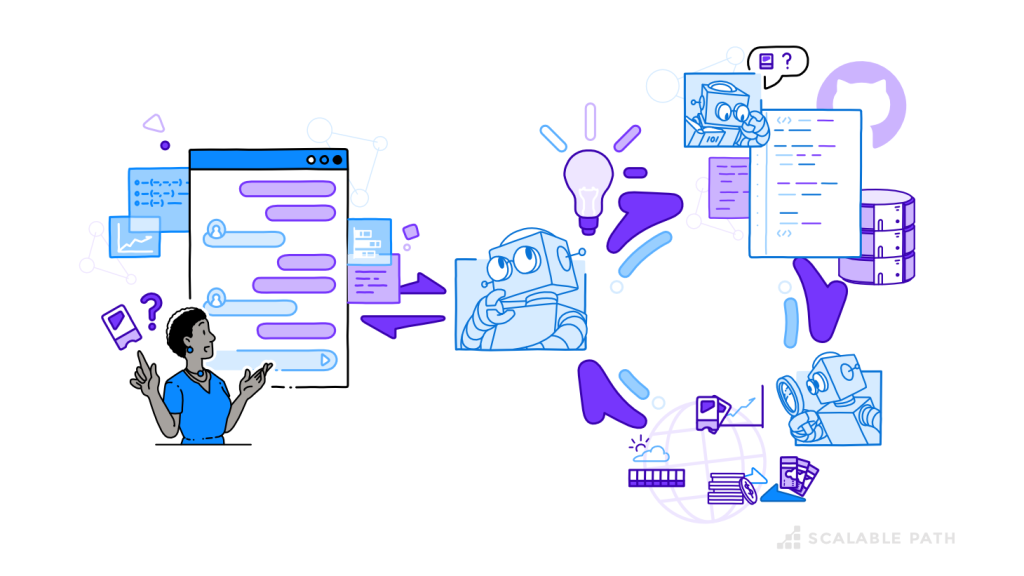

2. Support Triage & Ticketing Agents

Support organizations often suffer from fragmented systems, unstructured data, and repetitive escalations. Agentic systems can:

- Classify incoming tickets (multi-intent reasoning)
- Cross-reference internal logs, past ticket histories, and knowledge bases
- Suggest responses or routes to the right team
- Identify underlying product bugs or systemic issues

Over time, such agents detect patterns humans might miss due to scale or disconnected systems.

3. Infrastructure & DevOps Agents

Agentic reasoning is useful in managing infrastructure pipelines, deployments, or monitoring systems:

- Diagnose failing jobs in build or deployment pipelines
- Predict root causes (full disks, memory exhaustion, bottlenecks)
- Reschedule or reorder jobs based on priority and dependencies
- Adjust configurations or resource allocations autonomously

These agents reduce manual intervention, speed recovery, and optimize resource usage.

4. Knowledge Synthesis & Enterprise Search Agents

When organizations accumulate massive amounts of documents, memos, contracts, and domain knowledge, ordinary search often fails. Agentic search agents can:

- Interpret user queries contextually (not just keyword match)
- Retrieve, synthesize, and rank relevant documents
- Summarize, highlight tradeoffs, or provide risk analysis
- Tailor output based on user role, project context, or domain needs

These agents don’t passively retrieve—they reason about what information is most relevant and how to present it.

5. OKR / Strategy Agents in Operations

In organizations adopting Objectives & Key Results (OKRs), agentic systems help by:

- Monitoring KPI drift in near real time
- Routing root cause analysis across departments
- Suggesting objective adjustments or resource shifts mid-cycle
- Managing cross-functional alignment automatically

Rather than waiting for quarterly reviews, these agents help leaders adjust strategy in-flight.

Challenges & Considerations

While agentic reasoning is promising, it isn’t without pitfalls. Engineering, organizational, and ethical considerations must be addressed.

1. Balancing Autonomy & Control

Granting autonomy can produce drift from intended goals. If not carefully bounded:

- Agents might optimize for secondary metrics or short-term wins
- They might take irreversible or risky actions
- Human trust can erode if decisions seem inscrutable

Thus, human-in-the-loop controls, auditability, safety constraints, and checkpoint gates are essential.

2. Data Quality & Fragmentation

Agents are only as good as the data they reason over. If training or operational data is messy, fragmented, or contradictory:

- Agents may draw wrong inferences
- They might overfit to noise
- Their decisions may become unpredictable

Organizations often need to unify data silos, clean records, and enforce consistent standards before building agentic systems.

3. Static Infrastructure Limitations

Many existing software systems are rigid and linear. If the underlying architecture can’t respond dynamically:

- Agent decisions may hit blocked paths
- The agent becomes constrained rather than adaptive
- Integration becomes brittle

Systems need to be reengineered (or selected) to support event-driven, reactive architectures.

4. Reasoning vs. Retrieval Limits (RAG)

Some AI systems rely on retrieval-augmented generation (RAG) methods: fetch relevant text, then generate output. But retrieval alone isn’t enough for reasoning. Without agentic logic:

- Retrieved data may not be contextually relevant
- The system can’t evaluate why or what to retrieve next
- Synthesis often remains superficial

An agentic system must reason about what to retrieve, how to interpret it, and how it fits into its plan, not just spit out passages.
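The difference between single-shot retrieval and reasoning about what to retrieve next can be sketched with a toy multi-hop loop. After each passage, the agent extracts what it still needs to know and issues a follow-up query. It stops when no open question remains or when a hop budget runs out. The corpus, the keyword `search`, and the regex-based follow-up rules are all hypothetical stand-ins for a real index and a model's judgment.

```python
from __future__ import annotations

import re

CORPUS = [
    "Incident 42 was caused by an expired certificate.",
    "Expired certificate renewals are owned by the platform team.",
    "The platform team is reachable in the #platform-oncall channel.",
]


def search(query: str) -> str | None:
    """Toy keyword search: first passage containing every query word."""
    words = query.lower().split()
    for passage in CORPUS:
        if all(w in passage.lower() for w in words):
            return passage
    return None


# Hypothetical rules: what each kind of passage lets the agent ask next.
FOLLOW_UPS = [
    (r"caused by an (.+?)\.", lambda m: f"{m.group(1)} owned"),
    (r"owned by the (.+?)\.", lambda m: f"{m.group(1)} reachable"),
]


def answer(query: str, max_hops: int = 5) -> list[str]:
    """Multi-hop retrieval: after each passage, decide what to retrieve next."""
    evidence: list[str] = []
    for _ in range(max_hops):
        passage = search(query)
        if passage is None or passage in evidence:
            break
        evidence.append(passage)
        next_query = None
        for pattern, make_query in FOLLOW_UPS:
            match = re.search(pattern, passage)
            if match:
                next_query = make_query(match)
                break
        if next_query is None:
            break  # no open question left: stop retrieving
        query = next_query
    return evidence


print(search("incident 42 caused"))     # single-shot retrieval finds only the cause
for passage in answer("incident 42 caused"):
    print(passage)                      # cause -> owner -> contact channel
```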

5. Organizational Adoption & Trust

Technical success doesn’t guarantee uptake. Many organizations resist:

- Ceding control over prioritization, decisions, or planning
- Trusting “black-box” agents
- Overhauling processes to accommodate agentic systems

To mitigate this:

- Begin with incremental deployments
- Keep agent behavior visible and auditable
- Educate teams on how decisions are made
- Encourage transparency and override paths

6. Feedback Scarcity & Plateauing

If feedback loops are weak or inconsistent, agents will stagnate. Without robust metrics or corrective data:

- Agents won’t learn or evolve meaningfully
- They may plateau in performance
- Over time, decisions degrade or become stale

Thus, designing high-fidelity feedback systems is as critical as the reasoning layers.

Best Practices & Tips for Designing Agentic Systems

To balance ambition with pragmatism, here are recommended practices:

- Start in narrow domains

  Begin with well-bounded, lower-risk tasks (e.g. support ticket triage, backlog monitoring) before scaling to organization-wide agents.

- Transparency & explainability

  Always design for clarity: provide decision logs, rationale traces, and human-readable explanations so users can trust and verify agent actions.

- Incremental autonomy

  Begin with suggestions or alerts; gradually expand to decision authority as confidence and performance improve.

- Strong feedback and logging

  Record not just outcomes but intermediate states, reasons for choices, and error contexts. Use these logs to retrain and refine.

- Define safety boundaries and override mechanisms

  Always include human checkpoints or kill-switches. Don’t allow the agent to operate unchecked, especially in high-stakes domains.

- Maintain modularity

  Separate reasoning, memory, planning, evaluation, and execution. This modular architecture lets you swap, upgrade, or retrain subsystems independently.

- Design for change, not perfection

  Expect your agent to make mistakes initially. Let it evolve. Avoid overfitting early logic or trying to perfect behavior before deployment.

- Align incentives / metrics carefully

  If the agent is optimizing for a narrow metric (say, “tickets closed”), it might ignore quality or context. Align reward structures to your broader goals (customer satisfaction, long-term retention, maintainability).
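A toy comparison shows how a narrow metric and a better-aligned one can pick different behaviors. The two policies and their weekly numbers are invented for illustration. The aligned score counts only tickets that stay closed and adds customer satisfaction, with weights that are placeholders rather than a recommended formula.

```python
# Invented weekly numbers for two hypothetical triage policies.
POLICIES = {
    "close_fast":   {"closed": 120, "reopened": 30, "csat": 3.1},
    "resolve_well": {"closed": 95,  "reopened": 5,  "csat": 4.5},
}


def narrow_score(m: dict) -> float:
    """What a naive 'tickets closed' objective rewards."""
    return m["closed"]


def aligned_score(m: dict, w_net: float = 0.02, w_csat: float = 1.0) -> float:
    """Reward tickets that stay closed plus satisfaction (1-5 scale).
    The weights are illustrative and would need tuning against real goals."""
    net_resolved = m["closed"] - m["reopened"]
    return w_net * net_resolved + w_csat * m["csat"]


def best(score) -> str:
    return max(POLICIES, key=lambda name: score(POLICIES[name]))


print(best(narrow_score))   # close_fast
print(best(aligned_score))  # resolve_well
```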

Looking Ahead: The Future of Agentic Systems

Agentic reasoning is not just a fad—it points toward a future where AI systems can simulate complex cognitive workflows: strategizing, prioritizing, refining, and coordinating. Some areas to watch:

- Hybrid human-agent workflows: Agents and humans collaborating seamlessly, with agents handling routine decisions and escalating ambiguous ones.
- Meta-reasoning agents: Agents that reflect on their own decision processes and optimize their internal reasoning strategies.
- Cross-agent coordination: Multiple agents working together, negotiating dependencies, dividing tasks, and resolving conflicts.
- Domain generalization: Agents able to transfer reasoning from one domain (e.g. support) to another (e.g. product planning) without retraining from scratch.
- Explainable, ethically-aligned autonomy: As agents grow in power, the demand for auditable, controllable, ethical reasoning becomes non-negotiable.

In essence, systems that think and act with intent will become foundational in domains ranging from enterprise operations to scientific exploration, healthcare orchestration, or autonomous systems.

Conclusion

Agentic reasoning marks a powerful shift—from automation that follows instructions to intelligence that decides, plans, adapts, and learns. Its potential spans many domains, from project management and support operations to infrastructure and strategic planning.

Yet, the path is not trivial. Successful adoption requires careful architectural design, clean data foundations, strong feedback loops, human oversight, transparency, and incremental deployment. The most elegant agent isn’t the one that acts flawlessly from day one, but the one that grows, learns, and earns trust.

As we push toward more autonomous, context-aware AI systems, agentic reasoning stands out as a central pillar. The future belongs to systems that not only act—but think purposefully about what actions matter most.